A turning point for Rafael Yuste, a neuroscientist at New York’s Columbia University, came when his lab discovered it could activate a few neurons in a mouse’s visual cortex and make it hallucinate.

The mouse had been trained to lick at a water spout every time it saw two vertical bars, and researchers were able to prompt it to drink even with no bars in sight, said Yuste, whose team published a study on the experiment in 2019.

“We could make the animal see something it didn’t see, as if it were a puppet,” he told the Thomson Reuters Foundation in a phone interview. “If we can do this today with an animal, we can do it tomorrow with a human for sure.”

Yuste is part of a group of scientists and lawmakers, stretching from Switzerland to Chile, who are working to rein in the potential abuses of neuroscience by companies from tech giants to wearable startups.

Following his team’s discovery, he launched the NeuroRights Initiative, which advocates five “neuro-rights” to protect how a person’s brain data is accessed and used, including a right to mental privacy and to free will.

“Right now, it’s the wild west,” Yuste said.

In Chile, senator Guido Girardi is pushing to translate those principles into law, with a bill that would give legal protection to a suite of neuro-rights, and a complementary reform to the country’s constitution.

This month, the National Commission for Scientific and Technological Research began debating Girardi’s proposal, which got unanimous support from parliament in December 2020.

His office hopes the bill will be adopted later in the year.

“If this technology is industrialized without the proper regulations and rules, it will threaten fundamental human autonomy,” he said in a phone interview.

Meanwhile, the Organisation for Economic Co-operation and Development (OECD) has issued its own neurotechnology guidelines, noted Marcello Ienca, a researcher at ETH Zurich’s Health Ethics and Policy Lab, who works on the OECD project.

“Usually people only start talking about ethics and regulations after a big scandal, but with neurotech I hope we can take on these questions before that scandal,” he said.

‘Science-Fiction’ Scenarios

Advances in brain science like those made by Yuste’s team have made it possible to penetrate the brain using sensors and implants and access some degree of neural activity.

The U.S. Food and Drug Administration has approved deep brain stimulation procedures – implanting electrodes in the brain – to treat a range of disorders from Parkinson’s disease to epilepsy.

And major tech firms, from Facebook to Tesla, are working on “computer-brain” interfaces to allow consumers to control devices with their thoughts, while some smaller companies sell wearable devices to monitor brain activity.

But warnings of “science-fiction scenarios” of for-profit mind control are overblown for a line of research that is still so young, said Karen Rommelfanger, director of the neuroethics program at Emory University in Atlanta.

“Yes, the science will get better, not worse,” she said. “But exactly how it develops is up in the air.”

Ienca at ETH Zurich said major ethical issues could arise if the data that commercial neurotech devices collect is widely shared and analyzed without proper safeguards.

“We already have digital biomarkers that can indicate if someone is predisposed to developing dementia. Let’s say (that) data is shared with a prospective employer, you could face discrimination on the job market,” he said.

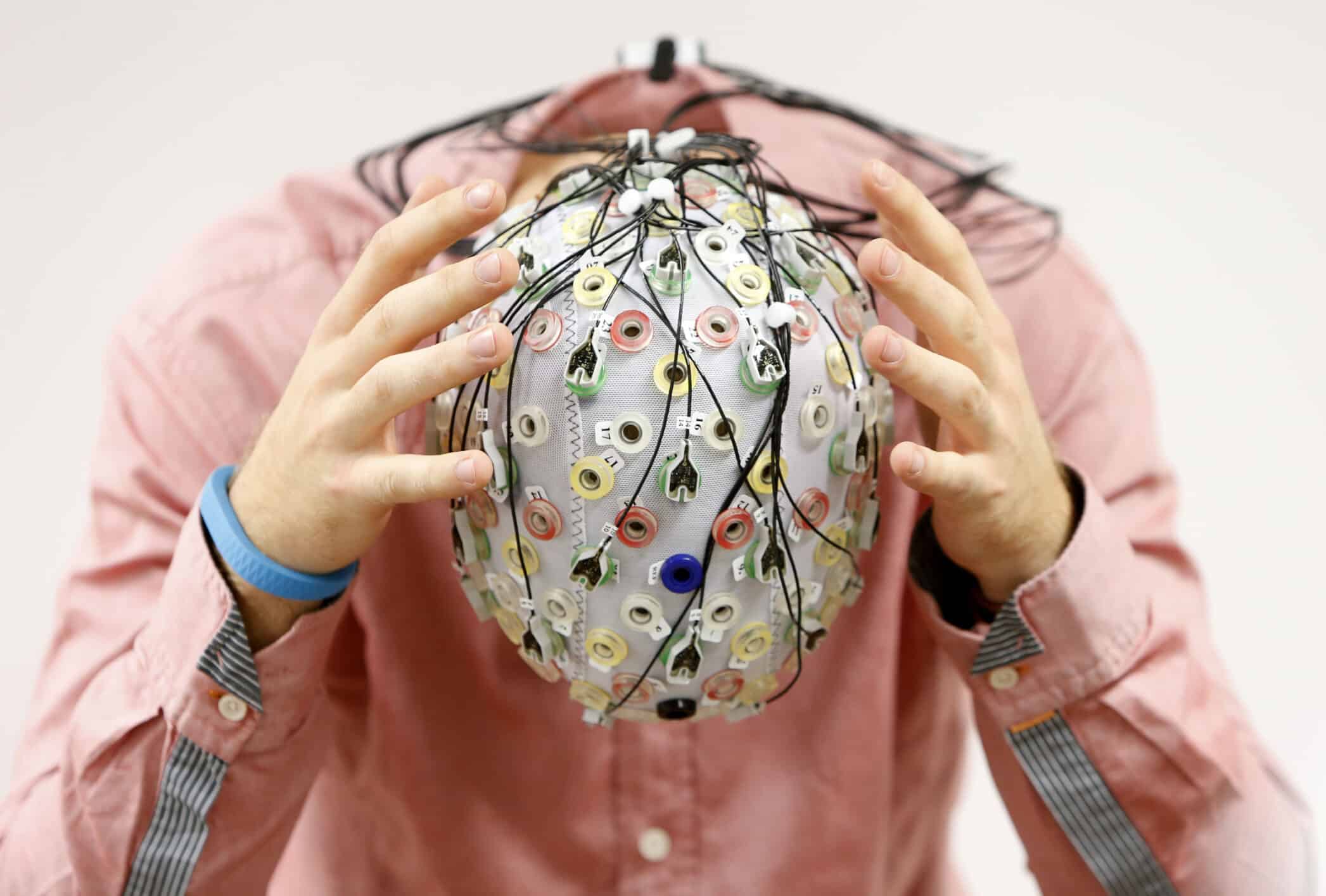

In 2018, Ienca published a review of six commercially available “neuromonitoring” headsets in the journal Nature Biotechnology.

He found that the electroencephalography (EEG) data gathered by the devices as they measure electrical activity in the brain could be leaked online, sold to third parties, or subjected to uses that consumers did not consent to.

This also concerns Adam Molnar, the co-founder of neurotech start-up Neurable, which is developing headphones that measure EEG to help users track brain activity and emotions like burnout.

When Neurable launches a new device, he said, it pledges not to sell user data, and only uses collected data to improve its own products.

“We want to be the good guys,” he said, adding that he hopes the move will help set the tone for other neurotech firms.

Data Harvesting

Rommelfanger at Emory is wary of moving too quickly to regulate brain tech, which she said could stifle innovation.

She recommends direct engagement with startups working on commercial devices, encouraging them to develop privacy-conscious and ethically-minded products.

Girardi favors strict regulation. “We didn’t regulate the big social media and internet platforms in time, and it costs us. We have lost control of all kinds of data, from our location to our romantic interests – it’s all up for sale,” he said.

“My proposals would give the status of your mind data the same as your organs, like your heart,” he added. “No one can interfere with it.”

“If we allow for all this brain data to be taken, who knows what the consequences will be? We’ll have algorithms deciding what it means to be ‘happy’,” Girardi said.

Tim Brown, a neuroethics expert at the University of Washington, said the data currently being collected is not powerful enough to do that.

“A lot of that brain data is basically noise,” he said.

But, he noted, scientists are working on algorithms to decode and analyze the data gleaned from EEG and functional magnetic resonance imaging (fMRI) scans, hoping to build computer models that can interpret an individual’s mental state.

He predicted the same dynamics present in the social media or search industry – where companies offer a service for free in return for permission to harvest user data – will likely surface in neurotech.

That could lead to serious consequences for privacy in the coming years, Brown warned, with companies linking users’ social media behavior to their brain images in real time to craft ads or other messages.

He also worries about how neurotechnology might exacerbate existing patterns of discrimination and racism.

In his research, he has warned of the possibility of “mandatory neurointerventions,” in which institutions like schools or prisons might deploy neurotechnology to assess mental states.

“Are we going to see a situation where prisoners are asked to put their heads in a box, and they are scanned to see if they are eligible for parole based on an algorithmic interpretation of their brain?” Brown asked.

“What will the impact of that be on Black and brown people, who we know are already disproportionately (represented) in these institutions.”

Yuste says policymakers around the world need to start contemplating these issues now.

He has been in touch with members of President Joe Biden’s administration and the United Nations about neuro-rights and related issues.

“It’s not about patching the law,” he said. “These technologies affect the core of what it means to be human – the only way to tackle this is with new human rights.”